Photo by author

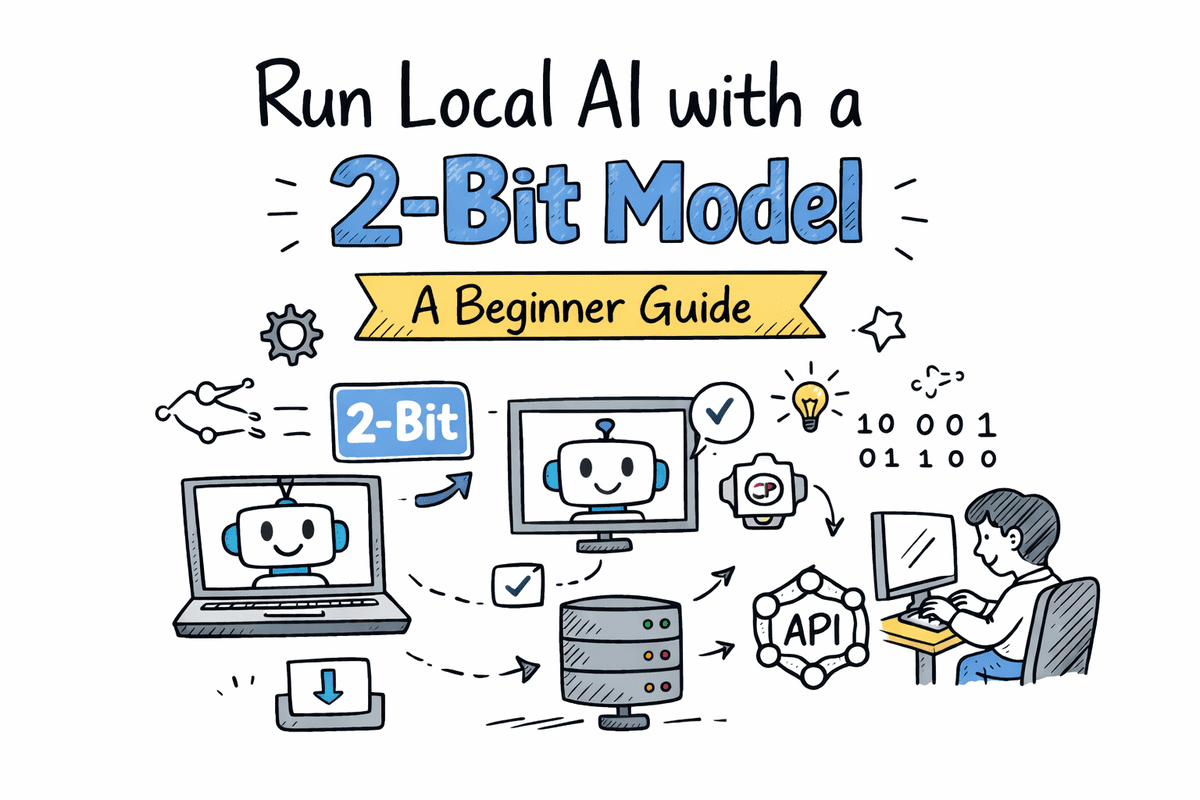

# Introduction

BitNet b1.58, developed by Microsoft researchers, is a native low-bit language model. It is trained from scratch with ternary weights that take only the values \(-1\), \(0\), and \(+1\). Rather than shrinking a large pre-trained model, BitNet is designed from the outset to run efficiently at very low precision. This reduces memory usage and compute requirements while maintaining strong performance.
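To make the ternary idea concrete, here is a minimal pure-Python sketch of the absmean quantization described in the BitNet b1.58 paper. Each weight is divided by the mean absolute value of the weights (\(\gamma\)), rounded, and clipped to \(\{-1, 0, +1\}\). It only illustrates the math on a flat list of floats. It is not how bitnet.cpp stores or computes weights, and the function name `absmean_ternary` is ours. Three possible values carry \(\log_2 3 \approx 1.58\) bits of information, which is where the "b1.58" in the name comes from.

```python
import math


def absmean_ternary(weights, eps=1e-5):
    """Quantize a flat list of float weights to {-1, 0, +1}.

    Sketch of absmean quantization: scale by the mean absolute
    weight (gamma), then round and clip to the range [-1, 1].
    """
    if not weights:
        raise ValueError("weights must be non-empty")
    gamma = sum(abs(w) for w in weights) / len(weights)
    # RoundClip(w / (gamma + eps), -1, 1); note that Python's round()
    # rounds exact halves to the nearest even integer.
    quantized = [max(-1, min(1, round(w / (gamma + eps)))) for w in weights]
    return quantized, gamma


weights = [0.9, -0.05, -1.2, 0.3]
q, gamma = absmean_ternary(weights)
print(q)  # [1, 0, -1, 0]
print(f"{math.log2(3):.2f} bits per ternary weight")  # 1.58 bits per ternary weight
```

Large weights saturate at \(\pm 1\), and weights that are small relative to \(\gamma\) become \(0\), so the model can effectively switch individual connections off.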

There is an important detail: if you load BitNet with the standard Transformers library, you won’t automatically get its speed and efficiency benefits. To take full advantage of its design, you need to use a dedicated C++ implementation called bitnet.cpp, which is built specifically for these models.

In this tutorial, you will learn how to run BitNet locally. We’ll start by installing the required Linux packages, then clone and build bitnet.cpp from source. Next, we’ll download the 2B-parameter BitNet model, run it as an interactive chat, start the inference server, and connect to it with the OpenAI Python SDK.

# Step 1: Installing Required Tools on Linux

Before building BitNet from source, we need to install the basic development tools needed to compile C++ projects.

- Clang is the C++ compiler we will use.

- CMake is the build system that configures and organizes the project.

- Git lets us clone the BitNet repository from GitHub.

First, install LLVM (which includes Clang):

```bash
bash -c "$(wget -O -
```

Then update your package list and install the required tools:

```bash
sudo apt update
sudo apt install clang cmake git
```

After this step is complete, your system is ready to build bitnet.cpp from source.
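Before moving on, you can confirm that the three tools are on your PATH. This small sketch uses only the Python standard library (`shutil.which`). The helper name `check_build_tools` is ours and is not part of BitNet.

```python
import shutil


def check_build_tools(tools=("clang", "cmake", "git")):
    """Return a mapping of tool name -> True if an executable is found on PATH."""
    return {tool: shutil.which(tool) is not None for tool in tools}


status = check_build_tools()
for tool, found in status.items():
    print(f"{tool:6} {'found' if found else 'MISSING'}")
```

Any tool reported as missing needs to be installed before the build in the next step will work.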

# Step 2: Cloning and Building BitNet from Source

Now that the required tools are installed, we will clone the BitNet repository and build it locally.

First, clone the official repository and go to the project folder:

```bash
git clone --recursive
cd BitNet
```

Next, create a Python virtual environment. This isolates dependencies from your system Python.

```bash
python -m venv venv
source venv/bin/activate
```

Install the required Python dependencies:

```bash
pip install -r requirements.txt
```

Now we compile the project and prepare the 2B-parameter model. The following command builds the C++ backend using CMake and sets up the BitNet-b1.58-2B-4T model.

```bash
python setup_env.py -md models/BitNet-b1.58-2B-4T -q i2_s
```

If compilation fails with an error related to `int8_t * y_col`, apply this quick fix. It changes that declaration to a pointer to `const int8_t` where needed:

```bash
sed -i 's/^\([[:space:]]*\)int8_t \* y_col/\1const int8_t * y_col/' src/ggml-bitnet-mad.cpp
```

After this step completes successfully, BitNet will be built and ready to run locally.
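If you want to check what the sed one-liner changes before running it on the real file, the same substitution can be reproduced with Python's `re` module. This is a sketch for inspection only. It uses `[ \t]*` where sed uses `[[:space:]]*` so the match stays on a single line, and the C++ sample is made up for illustration.

```python
import re

# Mirrors the sed expression: keep the leading indentation (group 1)
# and insert "const" before the "int8_t * y_col" declaration.
Y_COL_DECL = re.compile(r"^([ \t]*)int8_t \* y_col", flags=re.MULTILINE)


def add_const_to_y_col(source: str) -> str:
    return Y_COL_DECL.sub(r"\1const int8_t * y_col", source)


# Hypothetical snippet: assigning a const pointer to a non-const one
# is the kind of mismatch a strict compiler rejects.
sample = "void f(const int8_t * buf) {\n    int8_t * y_col = buf;\n}\n"
print(add_const_to_y_col(sample))
```

Because the pattern requires `int8_t` to be the first token on the line, running the substitution a second time leaves an already patched line unchanged.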

# Step 3: Downloading the Lightweight BitNet Model

Now we will download the lightweight 2B-parameter BitNet model in GGUF format. This format is suited to local inference with bitnet.cpp.

The BitNet repository lists an officially supported model that can be downloaded with the Hugging Face CLI.

Run the following command:

```bash
hf download microsoft/BitNet-b1.58-2B-4T-gguf --local-dir models/BitNet-b1.58-2B-4T
```

This will download the required model files to the models/BitNet-b1.58-2B-4T directory.

During the download, you should see output like this:

```text
data_summary_card.md: 3.86kB (00:00, 8.06MB/s)
Download complete. Moving file to models/BitNet-b1.58-2B-4T/data_summary_card.md
ggml-model-i2_s.gguf: 100%|████████████████████████████████████████████████| 1.19G/1.19G (00:11<00:00, 106MB/s)
Download complete. Moving file to models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf
Fetching 4 files: 100%|████████████████████████████████████████████████| 4/4 (00:11<00:00, 2.89s/it)
```

After the download is complete, your models directory should look like this:

```text
BitNet/models/BitNet-b1.58-2B-4T
```

You now have a 2B BitNet model ready for local inference.
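As a quick sanity check that the download is a complete GGUF file rather than an error page or a truncated file, you can read its fixed-size header. The sketch below assumes GGUF version 2 or later, whose header is the 4-byte magic `GGUF` followed by a little-endian uint32 version and two uint64 counts (tensors, then metadata key/value pairs). The helper name `read_gguf_header` is ours.

```python
import struct
from pathlib import Path

# magic (4 bytes), version (uint32), tensor count (uint64), metadata KV count (uint64)
GGUF_HEADER = struct.Struct("<4sIQQ")


def read_gguf_header(path):
    """Parse the fixed GGUF header; raise ValueError if it looks wrong."""
    with open(path, "rb") as f:
        raw = f.read(GGUF_HEADER.size)
    if len(raw) < GGUF_HEADER.size:
        raise ValueError(f"{path}: too short to be a GGUF file")
    magic, version, n_tensors, n_kv = GGUF_HEADER.unpack(raw)
    if magic != b"GGUF":
        raise ValueError(f"{path}: bad magic {magic!r}, expected b'GGUF'")
    return {"version": version, "tensor_count": n_tensors, "metadata_kv_count": n_kv}


model = Path("models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf")
if model.exists():  # run from the BitNet repository root
    print(read_gguf_header(model))
```

A `ValueError` here usually means the file should be downloaded again.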

# Step 4: Running BitNet in Interactive Chat Mode on Your CPU

Now it’s time to run BitNet locally in interactive chat mode on your CPU.

Use the following command:

```bash
python run_inference.py \
  -m "models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf" \
  -p "You are a helpful assistant." \
  -cnv
```

What it does:

- `-m` loads the GGUF model file.

- `-p` sets the system prompt.

- `-cnv` enables conversational mode.

You can also control performance using these optional flags:

- `-t 8` sets the number of CPU threads.

- `-n 128` sets the maximum number of new tokens to generate.

Example with optional flags:

```bash
python run_inference.py \
  -m "models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf" \
  -p "You are a helpful assistant." \
  -cnv -t 8 -n 128
```

Once running, you will see a simple CLI chat interface. You can type a question and the model will answer directly in your terminal.
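If you prefer to launch the chat from a Python script (for example, to choose the thread count programmatically), one option is to build the same argument list and hand it to `subprocess`. This sketch uses only the flags documented above. The helper names are ours, and it assumes you run it from the root of the BitNet repository with the virtual environment active.

```python
import os
import subprocess
import sys

MODEL_PATH = "models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf"


def build_chat_command(model=MODEL_PATH, system_prompt="You are a helpful assistant.",
                       threads=None, max_new_tokens=None):
    """Return the argument list for run_inference.py in conversational mode."""
    cmd = [sys.executable, "run_inference.py", "-m", model, "-p", system_prompt, "-cnv"]
    if threads is not None:
        cmd += ["-t", str(threads)]
    if max_new_tokens is not None:
        cmd += ["-n", str(max_new_tokens)]
    return cmd


def launch_chat(**kwargs):
    """Start the interactive chat; blocks until you exit it."""
    subprocess.run(build_chat_command(**kwargs), check=True)


# Example: one thread per logical CPU, up to 128 new tokens per reply.
cmd = build_chat_command(threads=os.cpu_count() or 4, max_new_tokens=128)
print(" ".join(cmd))
```

Calling `launch_chat(threads=8, max_new_tokens=128)` starts the same session as the command-line example above. Passing a list instead of a shell string avoids quoting problems with the system prompt.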

For example, we asked, “Who is the richest person in the world?” The model responded with a clear, readable answer based on its knowledge cutoff. Although this is a small 2B-parameter model running on the CPU, the output is coherent and useful.

At this point, you have a fully functional local AI chat running on your machine.

# Step 5: Starting a Local BitNet Inference Server

Now we will start BitNet as a local inference server. This lets you access the model through a browser or connect it to other applications.

Run the following command:

```bash
python run_inference_server.py \
  -m models/BitNet-b1.58-2B-4T/ggml-model-i2_s.gguf \
  --host 0.0.0.0 \
  --port 8080 \
  -t 8 \
  -c 2048 \
  --temperature 0.7
```

What these flags mean:

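- `-m` loads the model file.

- `--host 0.0.0.0` binds the server to all network interfaces, so it is reachable from other machines on your network as well as from this one.

- `--port 8080` runs the server on port 8080.

- `-t 8` sets the number of CPU threads.

- `-c 2048` sets the context length, in tokens.

- `--temperature 0.7` controls how random (creative) the responses are.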
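To see what the temperature setting does, recall the standard formulation: the sampler divides the model's logits \(z_i\) by the temperature \(T\) before applying softmax, \(p_i = e^{z_i/T} / \sum_j e^{z_j/T}\). Values below 1 sharpen the distribution toward the most likely token, and values above 1 flatten it. The toy logits below are made up for illustration and do not come from BitNet.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing them by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # toy scores for three candidate tokens
for t in (0.2, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

At 0.7 the top token still dominates, while some probability remains for the alternatives, which is why it is a common middle-ground setting.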

After the server starts, it will be available on port 8080.

Open your browser and go to http://127.0.0.1:8080. You will see a simple web UI where you can chat with BitNet.

The chat interface is responsive and smooth, even though the model is running locally on the CPU. At this point, you have a fully functional local AI server running on your machine.
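If the page does not load, a quick way to tell whether anything is listening on the port is a plain TCP connection attempt. This standard-library sketch only checks that a socket accepts connections. It does not verify that the process behind it is BitNet. The helper name is ours, and the defaults `127.0.0.1` and `8080` match the address and port used above.

```python
import socket


def server_is_listening(host="127.0.0.1", port=8080, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # connection refused, unreachable, or timed out
        return False


print("BitNet server reachable:", server_is_listening())
```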

# Step 6: Connecting to Your BitNet Server Using the OpenAI Python SDK

Now that your BitNet server is running locally, you can connect to it using the OpenAI Python SDK. This allows you to use your local model as if it were a cloud API.

First, install the OpenAI package with `pip install openai`.

Next, create a simple Python script:

```python
from openai import OpenAI

client = OpenAI(
    base_url="
    api_key="not-needed"  # many local servers ignore this
)

resp = client.chat.completions.create(
    model="bitnet1b",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Explain Neural Networks in simple terms."},
    ],
    temperature=0.7,
    max_tokens=200,
)

print(resp.choices[0].message.content)
```

Here’s what’s going on:

- `base_url` points to your local BitNet server.

- `api_key` is required by the SDK but is usually ignored by local servers.

- `model` must match the model name exposed by your server.

- `messages` holds the system and user prompts.
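If you would rather not add the SDK as a dependency, the same request can be sent with the standard library alone. This is a sketch under assumptions. It assumes the server exposes an OpenAI-compatible `POST /v1/chat/completions` route on the host and port from Step 5, and it reuses the `bitnet1b` model name from the script above, so adjust both to whatever your server reports. The helper names and the `BASE_URL` value are ours.

```python
import json
import urllib.request

# Assumption: OpenAI-compatible routes under /v1 on the Step 5 host and port.
BASE_URL = "http://127.0.0.1:8080/v1"


def build_chat_payload(user_msg, system_msg="You are a helpful assistant.",
                       model="bitnet1b", temperature=0.7, max_tokens=200):
    """Build a chat-completions request body in the OpenAI format."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_msg},
            {"role": "user", "content": user_msg},
        ],
        "temperature": temperature,
        "max_tokens": max_tokens,
    }


def extract_reply(response):
    """Pull the assistant text out of a chat-completions response dict."""
    return response["choices"][0]["message"]["content"]


def chat(user_msg, base_url=BASE_URL, timeout=120):
    """POST one chat request to the local server and return the reply text."""
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(build_chat_payload(user_msg)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return extract_reply(json.load(resp))
```

With the server running, `print(chat("Explain Neural Networks in simple terms."))` should return the same kind of answer as the SDK version. The generous timeout leaves room for slower CPUs.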

Output:

A neural network is a type of machine learning model that is inspired by the human brain. They are used to recognize patterns in data. Think of them as a group of neurons (like tiny brain cells) that work together to solve a problem or make a prediction.

Imagine you are trying to identify whether a picture shows a cat or a dog. A neural network will take an image as input and process it. Each neuron in the network will analyze a small part of the image, such as a whisker or a tail. They will then pass this information on to other neurons, which will analyze the whole picture.

By sharing and combining information, the network can decide whether a picture is a cat or a dog.

In essence, a neural network is a way for computers to learn from data by mimicking how our brains work. They can recognize patterns and make decisions based on this recognition.

# Concluding Remarks

What I like most about BitNet is the philosophy behind it. This is not just another quantized model. It is built from the ground up to be efficient. This design choice really shows when you see how lightweight and responsive it is, even on modest hardware.

We started with a clean Linux setup and installed the required development tools. From there, we cloned and built bitnet.cpp from source and generated the 2B GGUF model. Once everything was compiled, we ran BitNet directly on the CPU in interactive chat mode. Then we took it a step further by starting a local inference server and finally connecting it to the OpenAI Python SDK.

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master’s degree in Technology Management and a Bachelor’s degree in Telecommunication Engineering. His vision is to create an AI product using graph neural networks for students struggling with mental illness.