
# Introduction

For years, artificial intelligence music generation was a complex research domain, limited to papers and prototypes. Today, this technology has entered the consumer limelight, and Google’s MusicFX DJ is leading this trend. It is a web-based application that translates text prompts into a continuous, controllable stream of music in real time. In this article, we look at MusicFX DJ from a technical perspective: its user-facing features, the technology that powers it, and what its development means for the field of data science.

# What is MusicFX DJ?

MusicFX DJ is an experimental, web-based application developed by Google DeepMind in partnership with Google Labs. It represents a significant shift from single-output artificial intelligence music generators to an interactive, performance-based experience. The tool is designed to be accessible, requiring no prior knowledge of music theory or digital audio workstation (DAW) skills.

At its core, MusicFX DJ works like a generative mixing deck. Users can input multiple text prompts like “funky bass line,” “ethereal synth pads,” and “driving hip-hop beat” and layer them simultaneously. The interface provides real-time, fader-like controls for parameters such as intensity, “chaos,” and density, allowing users to shape the music as it plays. Its real-time interactivity and high-quality 48 kHz stereo output set it apart from earlier static generation tools.


# The technology behind the beat: Lyria and real-time diffusion

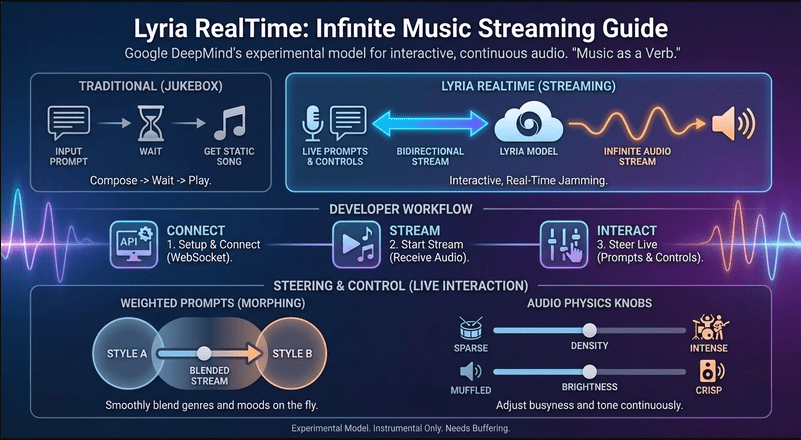

Although Google hasn’t released a full white paper on the specific model behind MusicFX DJ, it is publicly known to be powered by the Lyria family of models, specifically Lyria RealTime. Understanding Lyria provides the key to the tool’s capabilities.

Lyria is Google DeepMind’s latest music generation model. It is built on a diffusion model, an approach that has become standard for high-fidelity audio and image generation. Here’s a simple breakdown of how this technology works within MusicFX DJ:

- Training Process: The model is trained on a large dataset of music audio paired with written descriptions. It learns to associate patterns in audio waveforms—melody, harmony, timbre, rhythm—with semantic concepts from text.

- Diffusion Process: Rather than creating music in one step, a diffusion model works through a process of iterative refinement. It starts with pure noise (static) and gradually “denoises” it over many steps, turning it into coherent music that matches the input text prompt.

- Real-Time Adaptation (Lyria RealTime): A standard Lyria model generates a full clip from a prompt. Lyria RealTime modifies this process for streaming. It likely generates short, overlapping segments of audio in a continuous loop, while a separate control process dynamically adjusts generation parameters based on real-time user input (changing prompts, moving sliders). This allows for smooth transitions and live remixing.

- Conditioning and Control: The “magic” of MusicFX DJ’s layering comes from conditioned generation. The model is conditioned not on a single prompt but on a weighted combination of multiple prompts. When you adjust the fader for a “funky bass line,” you are adjusting the weight of that condition in the model’s generation process, making that element more or less dominant in the output audio stream.
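The weighted conditioning and step-by-step denoising described above can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the three-number “embeddings,” the function names `blend_conditions` and `toy_denoise`, and the stand-in “denoiser” that simply interpolates toward the blended target. A real diffusion model uses a trained neural network to predict the noise to remove, and this is not how Lyria itself is implemented.

```python
import random


def blend_conditions(embeddings, weights):
    """Combine several prompt embeddings into one weighted-average vector."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("at least one fader weight must be positive")
    dim = len(embeddings[0])
    return [
        sum(w * e[i] for e, w in zip(embeddings, weights)) / total
        for i in range(dim)
    ]


def toy_denoise(target, steps=50, seed=0):
    """Start from Gaussian noise and refine it toward `target` step by step.

    Stand-in for a learned denoiser: each step removes a share of the
    remaining gap, so the noise fades out linearly and vanishes at the end.
    """
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]
    for step in range(steps):
        alpha = 1.0 / (steps - step)  # reaches 1.0 on the final step
        x = [xi + alpha * (ti - xi) for xi, ti in zip(x, target)]
    return x


# Hypothetical 3-dimensional embeddings, one per prompt (made up for the demo).
prompts = {
    "funky bass line": [1.0, 0.0, 0.0],
    "ethereal synth pads": [0.0, 1.0, 0.0],
    "driving hip-hop beat": [0.0, 0.0, 1.0],
}
faders = [2.0, 1.0, 1.0]  # the bass fader is pushed up

condition = blend_conditions(list(prompts.values()), faders)
sample = toy_denoise(condition, steps=20)
print("blended condition:", condition)
print("refined sample:  ", [round(v, 3) for v in sample])
```

Raising one fader shifts the blended vector toward that prompt, which is the intuition behind one element becoming more dominant in the mix.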


This architecture explains the tool’s professional-grade audio quality and its unique interactive feel. It’s not just playing pre-made clips, but generating music on the fly in response to your commands.

# How MusicFX DJ works

Using MusicFX DJ feels less like AI programming and more like conducting an orchestra or DJing a set. The workflow is intuitive:

- Prompt Layering: The first step involves adding up to ten different text prompts, each on its own track.

- Real-Time Generation: Upon launch, the tool immediately begins generating a continuous piece of music that includes elements from all active prompts.

- Interactive Mixing: Each prompt track has its own volume fader and special controls (for example, “chaos” to add unpredictability, “density” to fill out the sound). Adjusting them in real time changes the music without interrupting the flow.

- Dynamic Evolution: The music is not on a fixed loop. The machine learning model continuously evolves the texture while respecting the user’s prompts and slider positions, introducing variations so that it doesn’t repeat itself.
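The workflow above, where audio keeps flowing while the faders move, can be sketched as a chunked streaming loop. This is a minimal illustration under stated assumptions: `generate_chunk` is a hypothetical stand-in that produces a sine tone instead of calling a model, the “fader” is a plain volume gain read once per chunk, and the linear crossfade is one common way to join overlapping segments, not a confirmed detail of MusicFX DJ.

```python
import math

SAMPLE_RATE = 48_000  # matches the 48 kHz output mentioned earlier


def generate_chunk(gain, start, length, freq_hz=220.0):
    """Hypothetical stand-in for the model: a sine tone scaled by the fader."""
    return [
        gain * math.sin(2.0 * math.pi * freq_hz * (start + n) / SAMPLE_RATE)
        for n in range(length)
    ]


def crossfade_append(stream, chunk, overlap):
    """Append `chunk`, blending its first `overlap` samples into the stream's tail."""
    if not stream:
        stream.extend(chunk)
        return
    overlap = min(overlap, len(stream), len(chunk))
    base = len(stream) - overlap
    for i in range(overlap):
        fade_in = (i + 1) / (overlap + 1)  # ramps from near 0 toward 1
        stream[base + i] = (1.0 - fade_in) * stream[base + i] + fade_in * chunk[i]
    stream.extend(chunk[overlap:])


def run_session(fader_schedule, chunk_len=4800, overlap=480):
    """Generate one chunk per fader reading and stitch them into one stream."""
    stream = []
    hop = chunk_len - overlap  # new samples contributed by each later chunk
    for k, gain in enumerate(fader_schedule):
        chunk = generate_chunk(gain, start=k * hop, length=chunk_len)
        crossfade_append(stream, chunk, overlap)
    return stream


# The user pulls the fader down partway through the session.
audio = run_session([1.0, 1.0, 0.5, 0.2])
print(f"{len(audio)} samples generated")
```

Because the control value is only read when a new chunk is generated, a fader move takes effect at the next chunk boundary, and the crossfade hides the seam instead of producing an audible jump.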

This design philosophy lowers the barrier to creative musical exploration, making it a powerful tool for brainstorming, prototyping song ideas, or simply enjoying the process of guided musical discovery.

# Implications for data scientists and the AI community

The launch of MusicFX DJ is more than just a great demo. It identifies several important trends in applied AI.

- Consumerization of Complex Models: It shows how cutting-edge research (diffusion models, large-scale audio training) can be packaged into intuitive applications. For data scientists, this highlights the importance of user experience (UX) design and real-time systems thinking in bringing artificial intelligence to a wider audience.

- Real-Time Controllable Generation: Moving from batch inference to real-time, interactive generation is a major technical challenge. MusicFX DJ shows that this is now possible for high-dimensional data such as audio. It paves the way for similar interactive artificial intelligence in video, 3D design, and beyond.

- APIs and Democratization of Capabilities: Google has made the underlying Lyria RealTime model available through an application programming interface (API), initially via the Gemini API and AI Studio. This allows developers and data scientists to build their own applications on top of this powerful music generation engine, encouraging innovation in gaming, content creation, and interactive media.

- Ethical and Creative Considerations: This tool also brings important questions to center stage. How are training datasets collected and managed? What are the copyright implications of AI-generated music? How do we ensure artists are compensated? By collaborating with musicians like Jacob Collier during development, Google highlighted a path where artificial intelligence augments rather than replaces human creativity.

# Conclusion

Google’s MusicFX DJ is a landmark application that successfully bridges the gap between cutting-edge artificial intelligence research and user-friendly creativity. Using the Lyria RealTime diffusion model, it provides a unique, interactive music generation experience that feels powerful and lively.

For data scientists, it serves as a compelling case study in real-time artificial intelligence system design, model conditioning, and commercialization of generative technology. As the underlying models become accessible via API, we can expect a wave of new applications that further blur the line between human-made and machine-assisted art. The age of interactive, generative media is not in the future. It’s here, and tools like MusicFX DJ are leading the way.


Shittu Olumide is a software engineer and technical writer with a knack for simplifying complex concepts and a keen eye for detail, passionate about leveraging modern technology to craft compelling narratives. You can also find Shittu on Twitter.